- Blog

- Zello app download

- Cardworks business card software free

- Microsoft dynamics pos 2009 hot keys

- What is teh best ide for python development

- Gta 5 mod menu ps4 2018

- Voicemod pro buy

- Sanako study 1200 9-0

- Nonstop flights from cmh

- Factoring trinomials worksheet not grouping

- Prison architect

- Minecraft mutant mod skydoesminecraft

- Solidworks 2012 key generator

- Izotope ozone 8 free download mac

- In darkness she is all i see

- Dona dona text

- Adobe acrobat login

- Eutron spa

- Shree swami samarth pictures

- Minecraft pocket edition mods android apk

- Gomez peer zone alternative

- Beyond two souls pc epic freezing

- Wxtoimg professional download

- Cst microwave studio for low frequencies edaboard

- Epiphone vs gibson es 125

- Nike beep test

- Parasite in city animated gifs

- Zelotes c12 mouse program

- Kodi theme music download mp3

- Descargar cross dj full

- Bootleg final fantasy 7 mod

- Lg firmware tool 2014

- Movavi video editor 14 pe

- Red orchestra 2 rising storm postet

- Elgato eyetv hybrid

- Team viewer free down load

- Context menu search for windows 10

- How to use photograv

- Asterix and cleopatra dress

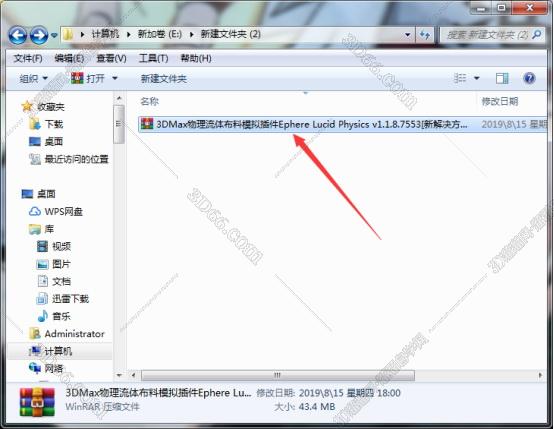

- Lucid physics v1-1-8-7553 for 3ds max 2011-2017

- Lord of chaos miniature

- Watch detective conan online 805

- Benjamin medwin caffe espresso parts list

Learning in a multi-target environment without prior knowledge about the targets requires a large amount of samples and makes generalization difficult. To solve this problem, it is important to be able to discriminate targets. In this paper, we propose goal-aware cross-entropy (GACE) loss, which can be utilized in a self-supervised way using auto-labeled goal states alongside reinforcement learning. We then devise goal-discriminative attention networks (GDAN), which utilize the goal-relevant information to focus on the given instruction. We evaluate the proposed methods on visual navigation and robot arm manipulation tasks with multi-target environments and show that GDAN outperforms the state-of-the-art methods in terms of task success ratio, sample efficiency, and generalization. Additionally, qualitative analyses demonstrate that our proposed method can help the agent become aware of and focus on the given instruction clearly.

The terms targets and goals have been used in diverse manners in the existing literature. There are subgoal generation methods that generate intermediate goals such as imagined goals (Nair et al., 2018; Florensa et al., 2018) or random goals Pardo et al. […] (2019) aims to deal with multiple tasks, learning to reach different goal states for each task. Scene-driven visual navigation tasks (Zhu et al., 2017; Devo et al., 2020; Mousavian et al., 2019) specify the goal by an image. However, these tasks require the agent only to search for visually similar locations or objects, rather than gaining semantic understanding of goals and promoting generalization. Instruction-based tasks (Anderson et al., 2018; Shridhar et al., 2020) focus on learning to follow detailed instructions. These tasks have a different purpose from our tasks, where we focus on having appropriate interactions with multiple targets depending on the instruction.

Representation learning in reinforcement learning

Prior to describing the main method, this section explains the automatic collection of goal data for self-supervised learning. Suppose that the instruction $I^z$ specifies the target $z$ as the goal and the episode ends when the goal is reached at time step $t'$. We refer to the state $s_{t'}$ as a goal state, which we assume is highly correlated with the reward or the rewarding state. Throughout the training, the reached goal label $z$ and the corresponding goal state $s_{t'}$ are automatically collected as a tuple $(s_{t'}, \mathrm{one\_hot}(z))$, called storage data. Rather than being manually provided with the goal information, the agent actively gathers the data needed to learn the goals, relying only on the instruction $I^z$. This allows the agent to learn in an end-to-end, self-supervised manner. The states for the failed episodes are also stored as a negative case with low probability $\epsilon_N$. We clarify that the storage data does not serve as a prior during learning and is instead used for training alongside the multi-target reinforcement learning task.

3.3 Goal-discriminator by goal-aware cross-entropy

During inference, the state encoding vector $e_t$ is passed through the first linear layer of the goal-discriminator, which yields the query vector $q^z_t$ that implicitly represents the goal-relevant information. Meanwhile, the vector $e_t$ is passed through a gated-attention (Wu et al., 2018) with instruction $I$. The gated-attention vector is passed through an LSTM (Long Short-Term Memory) (Hochreiter and Schmidhuber, 1997) and split in half into key $k_t$ and value $v_t$. Suppose the vectors $q^z_t$, $k_t$, and $v_t$ are $d_q$-, $d_k$-, and $d_v$-dimensional, respectively.
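The auto-labeling of goal states can be illustrated with a small sketch. It is not the authors' implementation: the class name `GoalStorage`, the method `add_episode`, the choice to store the final state of a failed episode, and the use of one extra class index for negative cases are all assumptions made for illustration. Only the tuple form (goal state, one-hot label) and the low storage probability for failed episodes come from the text.

```python
import random
from typing import Any, List, Optional, Tuple


def one_hot(index: int, size: int) -> List[float]:
    """Return a one-hot list of length `size` with a 1.0 at `index`."""
    vec = [0.0] * size
    vec[index] = 1.0
    return vec


class GoalStorage:
    """Hypothetical buffer of auto-labeled (state, one-hot label) tuples."""

    def __init__(self, num_targets: int, neg_prob: float, seed: int = 0) -> None:
        # Assumption: negatives get their own class, index `num_targets`.
        self.num_classes = num_targets + 1
        self.negative_label = num_targets
        self.neg_prob = neg_prob  # plays the role of epsilon_N in the text
        self.rng = random.Random(seed)
        self.data: List[Tuple[Any, List[float]]] = []

    def add_episode(self, final_state: Any, reached_goal: Optional[int]) -> bool:
        """Store the last state of an episode; return True if it was stored.

        `reached_goal` is the target index z when the episode succeeded,
        or None when the episode failed.
        """
        if reached_goal is not None:
            # Successful episode: the label comes from the instruction's target.
            label = one_hot(reached_goal, self.num_classes)
            self.data.append((final_state, label))
            return True
        if self.rng.random() < self.neg_prob:
            # Failed episode: kept only occasionally, as a negative case.
            label = one_hot(self.negative_label, self.num_classes)
            self.data.append((final_state, label))
            return True
        return False
```

In such a setup the stored tuples would serve as supervised targets for a cross-entropy loss trained alongside the reinforcement learning objective, so no manual goal labels are needed.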